In recent months, the rise of AI-based natural language processing tools and applications has taken the world by storm. One of the trending questions in this regard has been: what is prompt engineering?

ChatGPT, a service from OpenAI (which counts Elon Musk among its co-founders), has dominated most of the headlines. The basic premise of ChatGPT is to use machine learning and large language models to generate answers to user prompts in a way that closely resembles human conversation. Prompt engineering plays a big role in this by supplying the specific instructions, constraints, and refinements under which the AI can provide better answers.

In short, prompt engineering involves wording your request in such a way that it enables the AI model to understand your intentions more accurately. When mastered, this can reduce the time it takes to extract the desired answer from tools like ChatGPT.

What is prompt engineering and why is it necessary?

As mentioned above, prompt engineering is the process of optimising prompts or instructions to achieve desired outputs. All large language models (LLMs), including ChatGPT, Google Bard, Microsoft’s Bing Chat, and more, respond to prompt engineering, so it can be used to get the best possible results for user queries.

Several factors come into play when writing a prompt. These include wording, formatting, and the amount of relevant context supplied with the query. The more specific and detailed your prompt, the more informative the result tends to be. Most people are not able to fully utilise the capabilities of AI because they do not fully understand what a good prompt should contain.

A Few Examples

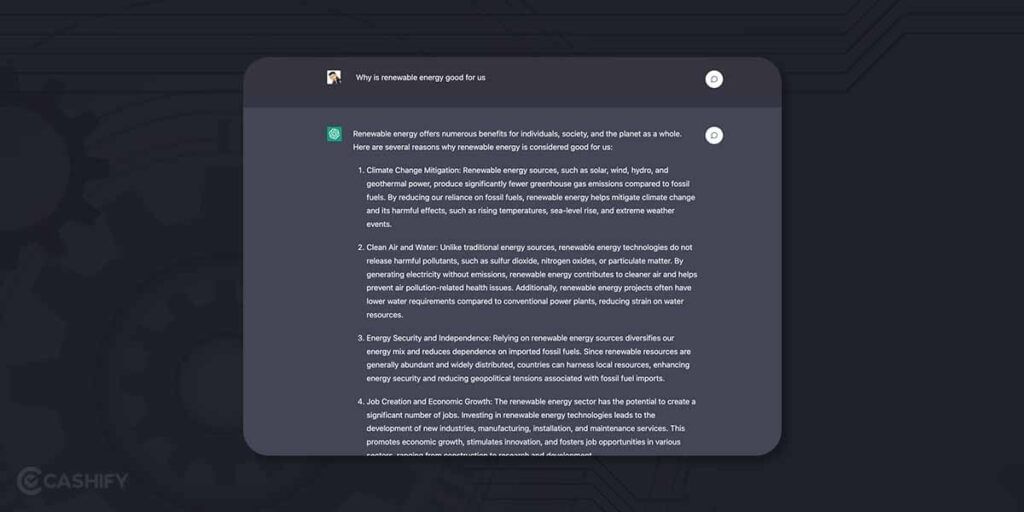

For example, if you were to ask ChatGPT, “Why is renewable energy good for us?” you may get a long, drawn-out result that does not properly answer your query. However, if you write, “In 2-3 sentences, explain why renewable energy is beneficial for the environment”, the result will be a concise answer that summarises the core of the matter without any fluff.

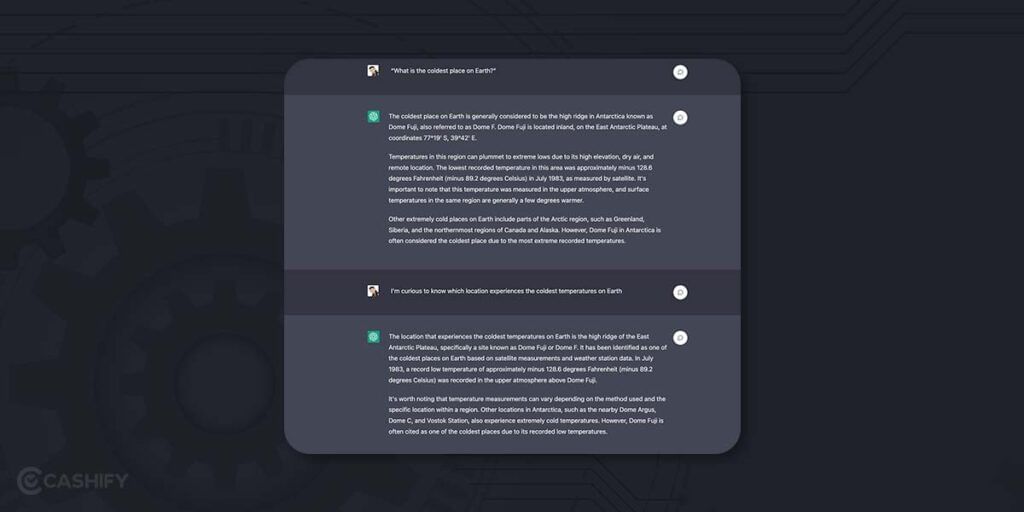

Another example of prompt engineering is asking the same query with different wordings. Queries like “What is the coldest place on Earth?” and “I’m curious to know which location experiences the coldest temperatures on Earth” ask the same thing in different ways, and the AI generates its response by considering the query’s framing. In this case, the first question will get an answer about Dome Fuji in Antarctica and a detailed explanation of why it is the coldest region. The answer to the second query will revolve more around specific locations and address the user’s curiosity.

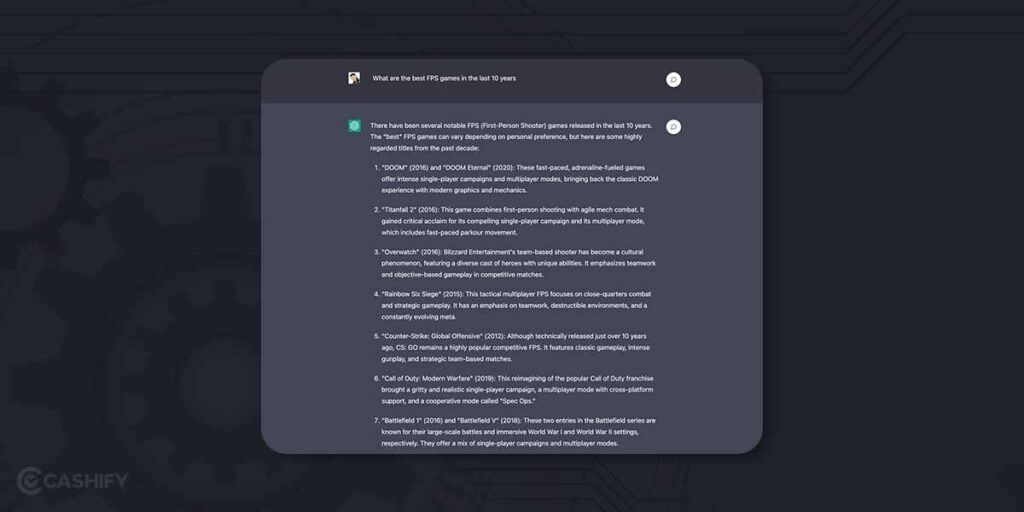

In addition to this, ChatGPT’s ability to build on its previous responses can also be put to use in prompt engineering. Asking a series of related questions pushes ChatGPT to delve deeper and give more detailed answers. An example of this would be:

“What are the best FPS games of the last 10 years?”

“Now shorten the list to titles launched only on PS4 and PS5”

“Give me a detailed summary of your top 5 recommendations”
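
One way to picture why follow-up prompts like these work: chat-style tools typically send the conversation so far along with each new message, so “the list” in the second prompt can be resolved against the first answer. The sketch below only illustrates that idea. The `role`/`content` message layout mirrors a common chat-API convention, while `add_turn` and the placeholder reply are hypothetical names introduced here, not part of any library.

```python
def add_turn(history, role, content):
    """Return a new history list with one more message appended."""
    return history + [{"role": role, "content": content}]


follow_ups = [
    "What are the best FPS games of the last 10 years?",
    "Now shorten the list to titles launched only on PS4 and PS5",
    "Give me a detailed summary of your top 5 recommendations",
]

history = []
for prompt in follow_ups:
    history = add_turn(history, "user", prompt)
    # A real tool would send the full `history` to the model at this point.
    # A placeholder string stands in for the model's reply (assumption).
    reply = f"(model reply to: {prompt})"
    history = add_turn(history, "assistant", reply)

# Each user prompt is followed by one reply, and earlier turns are kept.
for message in history:
    print(message["role"], "->", message["content"])
```

Because every earlier turn stays in `history`, a short follow-up can lean on what was already said instead of restating it.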

Once you get the hang of it and are capable of adapting your prompts to different styles, you will realise how good ChatGPT’s output actually is. Of course, prompt engineering is not limited to text-based tools and can also be used on AI image-generation platforms like DALL-E and Midjourney.

With image tools, the level of complexity is, of course, higher, but the method remains the same. For example, writing “Show me an image of a German Shepherd under a palm tree” will get you what you are asking for, but you can be more creative with “Show me a German Shepherd standing on the Mona Lisa at the full moon on a beach in Ibiza.” Prompts can be endless and are only limited by your creativity.

How can you learn Prompt Engineering?

The best way to properly understand prompt engineering is… you guessed it: trial and error. The more you experiment with different types of prompts on ChatGPT or any other LLM, the higher your chances of cracking the code to effective prompt generation. At the time of writing, OpenAI recommended using the latest “text-davinci-003” and “code-davinci-002” models for text and code prompts, respectively.

That being said, here are a few tips that will help you with the task.

- Use ### or """ to clearly separate the instruction from the context in your prompt. This will help the AI better understand your request.

- Check out articles on how others have approached it. See what parameters were used in each of the prompts and how many queries it took to reach the required output.

- It goes without saying that you need to be as descriptive with your prompts as you possibly can.

- A rule of thumb is to provide some example material along with your query to help ChatGPT understand your request. Don’t just ask, “Suggest a smartphone with the best display”. Instead, you should find a relevant excerpt and write, “Analyze the below-mentioned paragraph about best smartphones and suggest the devices with the best display”, and paste the excerpt below.

- It is better to describe what you want ChatGPT to do than what you don’t want it to do.
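
Several of the tips above (a clear delimiter, a descriptive instruction, a pasted excerpt) can be combined in a small helper. This is a minimal sketch rather than an official recipe: `build_prompt` is a hypothetical function written for this article, and the excerpt about two phones is made up purely for illustration.

```python
DELIMITER = '"""'  # triple quotes used to fence off the pasted context


def build_prompt(instruction, context):
    """Put the instruction first, then the context between delimiters."""
    lines = [
        instruction.strip(),
        "",
        "Text: " + DELIMITER,
        context.strip(),
        DELIMITER,
    ]
    return "\n".join(lines)


# Made-up excerpt, standing in for a paragraph you would paste yourself.
excerpt = "Phone A has a 6.7-inch OLED panel. Phone B uses an LCD screen."

prompt = build_prompt(
    "Analyze the paragraph below about smartphones and suggest "
    "the devices with the best display.",
    excerpt,
)
print(prompt)
```

Keeping the instruction and the pasted text visibly apart makes it less likely that the model treats part of the excerpt as something it has been asked to do.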

Controlling The Response

The randomness and creativity of a response are controlled by a parameter called temperature. It is typically set between 0 and 1 (the OpenAI API accepts values up to 2): values near 1 give more varied, creative answers, while values near 0 give more focused and predictable responses. Learning to use it can get you responses better suited to your needs. For now, the setting is available in the OpenAI Playground rather than in ChatGPT itself.
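
To build intuition for what temperature does, the sketch below applies the standard temperature-scaled softmax to a set of made-up scores for three candidate next words. It illustrates the general technique only: the scores are invented, `softmax_with_temperature` is a name chosen here, and how any particular product applies temperature internally is an assumption.

```python
import math


def softmax_with_temperature(scores, temperature):
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        # Real systems usually treat 0 as "always pick the top choice";
        # this sketch simply rejects it to avoid dividing by zero.
        raise ValueError("temperature must be positive in this sketch")
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


scores = [2.0, 1.0, 0.5]  # made-up scores for three candidate words

low = softmax_with_temperature(scores, 0.2)   # near 0: top choice dominates
high = softmax_with_temperature(scores, 1.0)  # near 1: choices are more even

print("temperature 0.2:", [round(p, 3) for p in low])
print("temperature 1.0:", [round(p, 3) for p in high])
```

At the lower temperature almost all of the probability lands on the highest-scoring word, which is why low settings feel more predictable and high settings feel more creative.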

Summary

It is quite easy to find online tutorials, blog posts, research papers, and community forums that go into further detail about prompt engineering techniques and best practices. Reading up on them goes a long way towards properly understanding and utilising AI-based chatbots. Of course, AI and NLP keep evolving, with new techniques and resources appearing all the time. Therefore, it’s advisable to conduct further research to find the most up-to-date and relevant learning opportunities.
